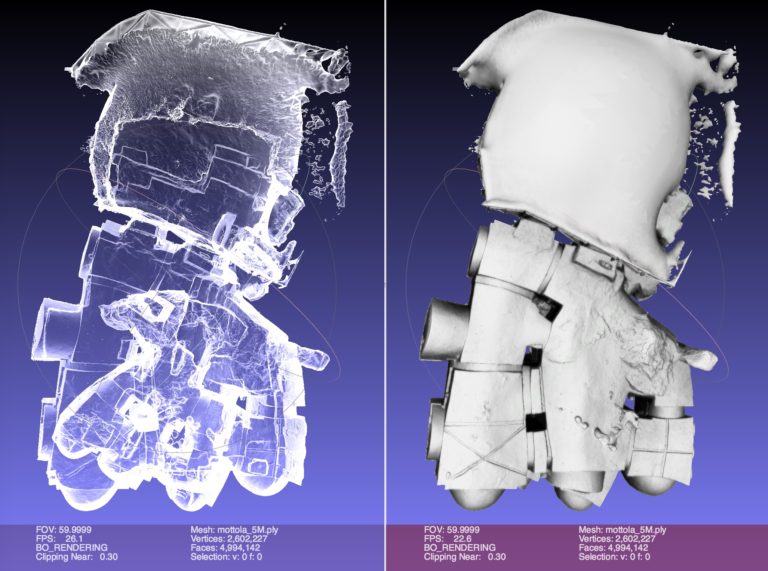

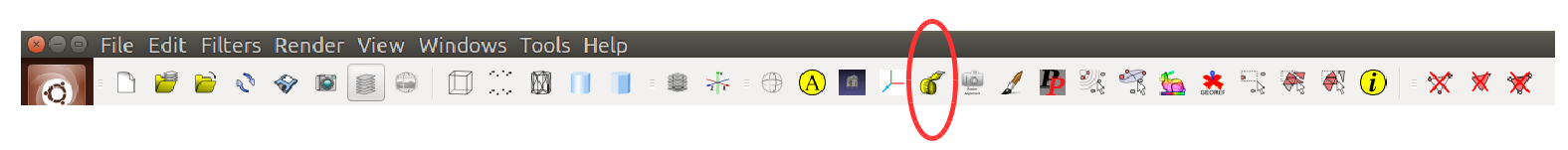

I want to create a textured obj file from a point cloud with color. So far, the steps I have taken are:

2) Filters: Point Set: Surface reconstruction: poisson
3) Filters: Color Creation: Per vertex color function
4) Filters: Sampling: Vertex Attribute Transfer (transfer color from pcl to mesh)

And that's where I get stuck. This mesh does not have any face or wedge color, and so the exported obj is textureless. When I try to run "Filters: Texture: Transfer Vertex Attributes to Texture", I get an error: "Target mesh doesn't have Per Wedge Texture Coordinates". How do I generate wedge coordinates for the surface? And what about faces, do I need them as well?

I've had some progress on generating wedge coordinates, but still cannot create a texture. The following additional steps add wedge coordinates:

5) Filters: Texture: Per Vertex Texture Function
6) Filters: Texture: Convert PerVertex UV into PerWedge UV

The resulting mesh has ~55000 vertices and ~110000 faces. I thought the next step would be to use Filters: Texture: Transfer Vertex Attributes to Texture, or Filters: Texture: Vertex Color to Texture, but both of these processes hang indefinitely. I let them run for as long as 1 hr before giving up. I also tried decimation to reduce the number of faces to as low as 500, but it still hangs.

Three-dimensional vision cameras, such as RGB-D cameras, use 3D point clouds to represent scenes. File formats such as XYZ and PLY are commonly used to store 3D point information as raw data. This information does not contain further details, such as metadata or segmentation, for the different objects in the scene. Moreover, objects in the scene can be recognized in a later process and used for other purposes, such as camera calibration or scene segmentation. We propose a method to recognize a basketball in the scene using its known dimensions to fit a sphere formula. In the proposed cost function, we search for three different points in the scene using RANSAC (Random Sample Consensus). Furthermore, by taking into account the fixed basketball size, our method differentiates the sphere geometry from other objects in the scene, making it robust in complex scenes. In a later step, the sphere center is fitted using z-score values, eliminating outliers from the sphere. Results show that our methodology converges in finding the basketball in the scene and that the center precision improves using the z-score: the proposed method reduces outliers in noisy scenes by a factor of 1.75 to 8.3 relative to using RANSAC alone. Experiments show that our method has advantages when compared with a recent deep learning method.

RGB-D cameras have become a common sensor in computer vision. Their popularity started with the release of the Microsoft Kinect for the Xbox video game console; once the camera could be used on a personal computer, scientists started taking advantage of it for research. This type of camera produces, as data output, a color image and a depth image, both describing the captured scene. Currently, there are different brands and models of RGB-D cameras, and it is common for different cameras to offer different characteristics and limitations. Recently, this type of camera has been embedded in mobile devices, further popularizing the technology and the use of 3D point clouds. Some formats for saving 3D point clouds are the XYZ format and the PLY format, which include the spatial information describing each point in three dimensions and can sometimes include metadata, such as color. These files can vary in size and point density, depending mostly on the camera used to generate them. Thousands of points can be found in a scene captured in a single shot and, generally, the complexity of processing this information increases with the quality of the camera and of the information it produces: the greater the detail, the greater the point density.
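As a small illustration of the PLY format mentioned above, the following sketch writes and reads an ASCII PLY file that stores per-point position and color. The helper names `write_ply_ascii` and `read_ply_ascii` are hypothetical and not part of any library, and the reader is only assumed to handle the simple layout that the writer produces.

```python
def write_ply_ascii(path, points, colors=None):
    """Write points [(x, y, z), ...] and optional colors [(r, g, b), ...] (0..255)."""
    with open(path, "w", encoding="ascii") as f:
        f.write("ply\nformat ascii 1.0\n")
        f.write(f"element vertex {len(points)}\n")
        f.write("property float x\nproperty float y\nproperty float z\n")
        if colors is not None:
            f.write("property uchar red\nproperty uchar green\nproperty uchar blue\n")
        f.write("end_header\n")
        for i, (x, y, z) in enumerate(points):
            line = f"{x} {y} {z}"
            if colors is not None:
                r, g, b = colors[i]
                line += f" {r} {g} {b}"
            f.write(line + "\n")


def read_ply_ascii(path):
    """Read back a file produced by write_ply_ascii (not a general PLY parser)."""
    with open(path, encoding="ascii") as f:
        lines = f.read().splitlines()
    header_end = lines.index("end_header")
    header = lines[:header_end]
    # The vertex count comes from the "element vertex N" header line.
    n = next(int(l.split()[2]) for l in header if l.startswith("element vertex"))
    has_color = any(l.endswith(" red") for l in header)
    points, colors = [], []
    for l in lines[header_end + 1 : header_end + 1 + n]:
        v = l.split()
        points.append(tuple(float(t) for t in v[:3]))
        if has_color:
            colors.append(tuple(int(t) for t in v[3:6]))
    return points, (colors if has_color else None)
```

An XYZ file is simpler still: in its plainest form it is one `x y z` triple per line with no header, which is why color and other metadata are usually carried in PLY instead.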
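The basketball detection idea described above (sample three points with RANSAC, fit a sphere of known radius, then refine the center after z-score outlier removal) can be sketched roughly as follows. This is an illustration under stated assumptions, not the authors' actual cost function: the geometric construction of the center from three points, the algebraic least-squares refit, the function names, and all thresholds (`tol`, `iters`, `z_max`) are assumptions introduced here.

```python
import numpy as np


def sphere_centers_from_3_points(p1, p2, p3, radius):
    """Return the (up to two) centers of a sphere of known radius through three points."""
    ab, ac = p2 - p1, p3 - p1
    n = np.cross(ab, ac)                      # normal of the triangle's plane
    n2 = n @ n
    if n2 <= 1e-12 * (ab @ ab) * (ac @ ac):   # degenerate or nearly collinear sample
        return []
    # Circumcenter of the triangle (p1, p2, p3).
    cc = p1 + ((ac @ ac) * np.cross(n, ab) + (ab @ ab) * np.cross(ac, n)) / (2.0 * n2)
    h2 = radius**2 - (cc - p1) @ (cc - p1)
    if h2 < 0:                                # triangle too large to lie on the sphere
        return []
    offset = np.sqrt(h2) * n / np.sqrt(n2)
    return [cc + offset, cc - offset]


def ransac_sphere(points, radius, tol=0.005, iters=500, seed=0):
    """Assumed RANSAC loop: keep the candidate center with the most inliers."""
    rng = np.random.default_rng(seed)
    best_center, best_inliers = None, np.zeros(len(points), dtype=bool)
    for _ in range(iters):
        sample = points[rng.choice(len(points), size=3, replace=False)]
        for c in sphere_centers_from_3_points(*sample, radius):
            inliers = np.abs(np.linalg.norm(points - c, axis=1) - radius) < tol
            if inliers.sum() > best_inliers.sum():
                best_center, best_inliers = c, inliers
    return best_center, best_inliers


def refine_center_zscore(points, center, z_max=2.0):
    """Drop points whose distance to the center has |z-score| >= z_max, then refit."""
    d = np.linalg.norm(points - center, axis=1)
    z = (d - d.mean()) / (d.std() + 1e-12)
    kept = points[np.abs(z) < z_max]
    # Algebraic least-squares sphere fit: |p|^2 = 2 p.c + (r^2 - |c|^2).
    A = np.hstack([2.0 * kept, np.ones((len(kept), 1))])
    b = (kept**2).sum(axis=1)
    sol, *_ = np.linalg.lstsq(A, b, rcond=None)
    return sol[:3], kept
```

A typical call would be `center, inliers = ransac_sphere(points, radius=0.12)` followed by `refine_center_zscore(points[inliers], center)`, where `points` is an N×3 array in meters and 0.12 m is an assumed approximate basketball radius. Fixing the radius is what lets a three-point sample determine the sphere; without it, four points would be needed.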
